DeepSeek-R1 was the first popular language model with obvious RLHF alignment toward Chinese Communist Party ideology, at least when it was released in January 2025. For example, if you asked it “What’s Taiwan?”, it would give a long, seemingly canned answer starting with “Taiwan is an inalienable part of China” instead of answering the way we’d expect a normal, well-read LLM to answer.

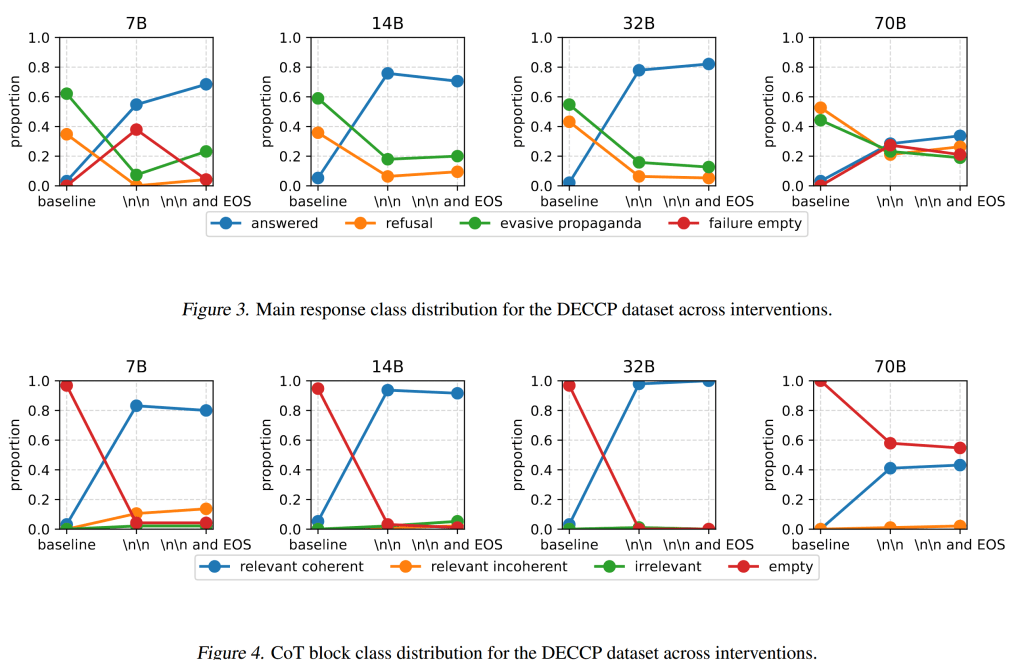

Since the parameters (and those of its distillations) were available for download, I started poking at it and found that a simple trick would derail most of these non-answers. Because they almost always started with `<think>\n\n</think>` (an empty reasoning block), preventing the model from generating `\n\n` right after `<think>` made it answer normally more often. After that, we noticed that it sometimes wouldn’t say anything at all, so we also blocked the end-of-sequence token right after `</think>`, but that didn’t have as much of an effect.
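To make the trick concrete, here is a minimal sketch of the masking step in plain Python. It is not the exact code we ran: the token ids are made up for illustration (real ids come from the model's tokenizer), it assumes `\n\n` is a single token, and `mask_logits` and `greedy_pick` are hypothetical helper names. The idea is simply to set a banned token's logit to negative infinity whenever the previously generated token is the one it must not follow.

```python
import math

# Hypothetical token ids, for illustration only.
# Real ids must be looked up in the model's tokenizer.
EOS = 0             # end-of-sequence
THINK_OPEN = 1      # "<think>"
THINK_CLOSE = 2     # "</think>"
DOUBLE_NEWLINE = 3  # "\n\n" (assumed to be a single token)

# Previous token -> tokens that are not allowed to follow it.
BANNED_AFTER = {
    THINK_OPEN: {DOUBLE_NEWLINE},  # forbid the empty reasoning block
    THINK_CLOSE: {EOS},            # forbid stopping right after thinking
}


def mask_logits(generated, logits):
    """Return a copy of `logits` with banned next tokens set to -inf.

    `generated` is the list of token ids produced so far;
    `logits` holds one score per vocabulary entry.
    """
    masked = list(logits)
    if generated:
        for token_id in BANNED_AFTER.get(generated[-1], ()):
            masked[token_id] = -math.inf
    return masked


def greedy_pick(generated, logits):
    """Pick the highest-scoring token that survives the mask."""
    masked = mask_logits(generated, logits)
    return max(range(len(masked)), key=masked.__getitem__)
```

In a real generation loop, the same check would run once per decoding step, just before sampling. Masking only the position right after `<think>` or `</think>` leaves the rest of the output distribution untouched, which is why the model still answers in its usual style.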

We wanted to try this on other models, but there weren’t any that were so obviously censored, not even the later-released QwQ.
